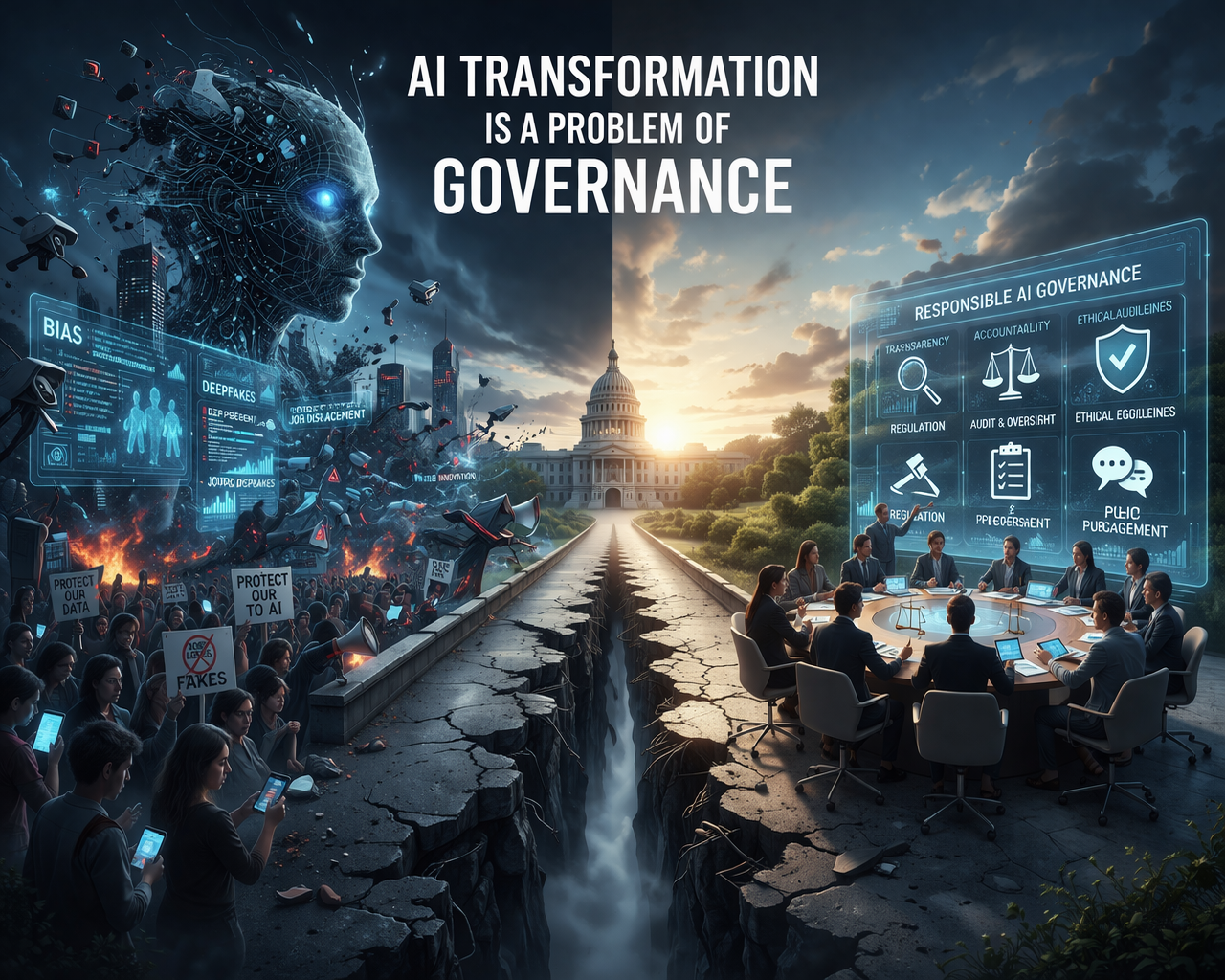

AI transformation is reshaping organizations, industries, and public institutions at an unprecedented pace. As artificial intelligence technologies are integrated into decision-making, operations, and customer-facing systems, governance becomes a critical factor in determining whether AI delivers value safely and ethically. AI transformation is a problem of governance because many organizations adopt AI faster than they can implement the policies, oversight mechanisms, and ethical frameworks required to manage risks effectively (McKinsey, State of AI Report 2023). Without robust governance, organizations face ethical violations, regulatory noncompliance, and operational failures.

Definition of AI Transformation

AI transformation refers to the strategic integration of artificial intelligence technologies across an organization to improve efficiency, enhance decision-making, and drive innovation. It involves implementing machine learning models, natural language processing systems, predictive analytics, and intelligent automation in both operational and strategic workflows.

| Component | Example Use Case | Benefit |
|---|---|---|
| Predictive Analytics | Forecasting demand in supply chains | Reduced inventory costs |
| NLP Chatbots | Customer service automation | 24/7 support and cost efficiency |
| Autonomous Systems | Manufacturing robotics | Increased production efficiency |

AI transformation is not solely a technical challenge: it also encompasses organizational change, workforce readiness, and governance alignment.

Governance in AI Context

Governance, in the AI context, refers to the framework of policies, standards, and accountability measures that ensure AI systems operate ethically, legally, and safely. AI governance involves multiple dimensions:

- Ethical oversight: Ensuring AI systems do not reinforce bias or cause harm.

- Regulatory compliance: Meeting local and international AI laws, data protection, and industry standards.

- Operational monitoring: Tracking AI performance, accuracy, and reliability.

- Strategic alignment: Ensuring AI initiatives align with organizational goals and societal expectations.

Table: AI Governance vs Traditional IT Governance

| Aspect | AI Governance | Traditional IT Governance |
|---|---|---|
| Risk Focus | Ethical, bias, societal impact | Security, uptime, compliance |
| Oversight | Multidisciplinary boards, ethics committees | IT managers, audit teams |
| Policy Needs | Continuous review due to adaptive AI | Standardized IT policies |

The adaptive and often opaque nature of AI algorithms presents unique governance challenges that traditional IT governance frameworks are not fully equipped to manage.

Why AI Transformation Challenges Governance

Rapid Technology Adoption

Organizations are implementing AI systems faster than regulatory bodies can provide guidance. As AI adoption accelerates, organizations often lack structured oversight mechanisms, resulting in potential compliance risks and ethical breaches.

- AI models may produce decisions that are not easily explainable, creating accountability gaps.

- Cross-border AI applications face conflicting regulatory frameworks, particularly around data privacy and algorithmic fairness.

Black-Box AI Models

Many AI algorithms, especially deep learning systems, operate as “black boxes,” meaning their decision-making processes are not transparent. Black-box models challenge traditional governance because:

- Decision rationales are difficult to audit.

- Bias and errors may go undetected.

- Accountability for AI-driven decisions becomes unclear.

Cross-Border Compliance Complexities

Global AI adoption exposes organizations to diverse legal and regulatory environments. For example:

- The European Union AI Act classifies AI applications by risk, imposing transparency and reporting requirements.

- In the United States, the White House's Blueprint for an AI Bill of Rights (2022) sets out human-centric principles, but it is non-binding guidance rather than law.

- China’s AI Ethics Guidelines focus on national security and data control.

| Region | Regulation | Focus |
|---|---|---|
| EU | AI Act | Risk classification, transparency |
| US | Blueprint for an AI Bill of Rights (non-binding) | Human-centric AI |
| China | AI Ethics Guidelines | Security, national AI strategy |

These discrepancies create governance complexity for multinational organizations implementing AI across borders.

Key Governance Challenges in AI Transformation

Ethical and Societal Risks

AI systems can perpetuate bias or generate unintended social consequences. Some of the key challenges include:

- Bias in algorithms: Training data may reflect historical inequities, leading to unfair outcomes.

- Workforce impact: Automation and AI-driven decision-making may displace jobs, necessitating reskilling programs.

- Privacy concerns: AI often relies on large datasets containing sensitive personal information, raising data protection issues.

Relevant frameworks: the OECD AI Principles, the EU AI Act, and the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems.

Organizational and Strategic Issues

AI governance is not only a technical issue; it is deeply organizational. Challenges include:

- Lack of AI-literate leadership to understand risks and opportunities.

- Integration issues with legacy IT systems.

- Difficulties in measuring AI ROI and quantifying ethical and operational risk.

Technical and Operational Challenges

AI deployment introduces technical governance challenges, including:

- Explainability: Understanding model decisions and ensuring transparency.

- Model monitoring: Tracking performance drift, accuracy, and bias over time.

- Cybersecurity risks: AI models can be attacked or manipulated, requiring specialized defense strategies.
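
As one illustration of the model monitoring item above, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a recent sample of model scores. PSI is one common drift statistic, not something the frameworks discussed here prescribe. The function name, bin count, and the thresholds in the comments are assumptions chosen for illustration, and the thresholds are a widely quoted rule of thumb rather than a standard.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two non-empty samples; larger values indicate more drift.

    Rule of thumb (an assumption, not a regulatory threshold):
    below 0.1 is stable, 0.1 to 0.25 is moderate drift, above 0.25 is large.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant baseline

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

In practice a check like this would run on a schedule against each monitored feature or score, with results above an agreed threshold routed to whoever owns the model.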

Best Practices for AI Governance

Establishing Ethical Frameworks

Ethical governance is the foundation of responsible AI deployment. Organizations need structured ethical frameworks so that AI projects operate fairly, transparently, and safely. Key steps include:

- Form AI ethics committees to oversee AI initiatives, review project proposals, and assess potential ethical risks. These committees provide accountability and guidance for AI decision-making.

- Conduct regular bias audits to detect and mitigate unfair outcomes in AI algorithms, so that systems do not reinforce discrimination or inequity.

- Adopt responsible AI certifications from recognized authorities to demonstrate adherence to ethical standards. Certification provides external validation that ethical governance measures are in place and effective.

By embedding ethics at every stage of AI development and deployment, organizations can address governance problems proactively and support sustainable, responsible AI adoption.
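
A bias audit can start with something as simple as comparing favorable-outcome rates across groups. The sketch below is a minimal, assumption-laden example: the function names, the `(group, outcome)` record format, and the 0.05 gap threshold are all hypothetical, and demographic parity is only one of several fairness definitions an ethics committee might choose.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def selection_rates(records: List[Tuple[str, int]]) -> Dict[str, float]:
    """records holds (group, outcome) pairs; outcome is 1 for a favorable decision."""
    totals: Dict[str, int] = defaultdict(int)
    favorable: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {g: favorable[g] / totals[g] for g in totals}


def bias_audit(records: List[Tuple[str, int]], max_gap: float = 0.05) -> dict:
    """Report the demographic parity gap and the disparate impact ratio."""
    rates = selection_rates(records)
    highest, lowest = max(rates.values()), min(rates.values())
    gap = highest - lowest
    # Ratio of the lowest to the highest selection rate; 1.0 means parity.
    ratio = lowest / highest if highest > 0 else 1.0
    return {
        "rates": rates,
        "parity_gap": gap,
        "impact_ratio": ratio,
        "passed": gap <= max_gap,  # max_gap is an illustrative threshold
    }
```

A failed audit here is a prompt for investigation, not a verdict: differences in rates can have legitimate explanations, which is why the result would go to a review body rather than trigger an automatic action.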

Regulatory and Compliance Alignment

Regulatory alignment matters because AI initiatives that operate outside legal frameworks expose the organization to legal and reputational risk. To manage this, organizations must:

- Ensure data privacy compliance with laws such as GDPR and CCPA, preventing unauthorized access to or misuse of sensitive information. Compliance frameworks reduce legal risk and build public trust.

- Monitor changes in international AI regulations for cross-border operations, as AI laws vary significantly between jurisdictions. Dedicated teams can track emerging requirements and implement the necessary adjustments.

- Implement internal audit and reporting structures to ensure continuous compliance. Regular audits help organizations identify gaps, correct errors, and maintain transparency with stakeholders and regulators.

Integrating regulatory alignment into AI governance lets organizations scale AI responsibly while mitigating risk, because governance involves both ethical and legal accountability.

Organizational Readiness

Organizational readiness addresses the human and structural aspects of governance. Governance fails when staff and leadership are unprepared to manage AI technologies effectively. Organizations should:

- Train executives and staff on AI literacy, ensuring decision-makers understand AI capabilities, limitations, and potential risks. Knowledgeable leadership is crucial for overseeing AI projects responsibly.

- Establish cross-functional governance teams that include IT, legal, ethics, compliance, and operations departments, so that AI decisions are reviewed from multiple perspectives.

- Apply change management strategies to support AI adoption, build accountability, and integrate governance practices into daily operations. Change management helps employees adapt to new AI workflows while maintaining compliance and ethical standards.

By preparing the organization at multiple levels, companies can keep AI adoption aligned with strategic objectives and societal expectations.

Takeaway

Ethical frameworks, regulatory alignment, and organizational readiness together treat AI transformation as the governance problem it is. These practices reduce risk, maintain compliance, and promote responsible, trustworthy AI adoption across all operational levels.

Summary: Core Principles of AI Governance

- Transparency: Clear, explainable AI decisions.

- Accountability: Defined responsibility for AI outcomes.

- Risk Management: Ongoing monitoring and mitigation.

- Ethical Alignment: Avoid harm and ensure fairness.

- Continuous Improvement: Adapt policies as AI evolves.

Key Points

- AI adoption drives efficiency but creates governance gaps.

- Ethical, regulatory, and operational governance is critical.

- Governance requires policies, cross-functional teams, and monitoring tools.

- Global AI regulation is fragmented and rapidly evolving.

- Failure to govern AI risks bias, privacy violations, and societal harm.

Managing AI Transformation Through Governance

Case Studies: Real-World AI Governance Examples

Google AI Principles

Google implemented AI Principles to ensure responsible AI deployment across its products. Key elements include:

- Avoiding unfair bias in algorithms.

- Ensuring transparency in AI systems.

- Designing for privacy and security (Google AI Principles, 2021).

These principles are enforced through internal review boards and audits, demonstrating that structured governance can mitigate risks of AI transformation.

IBM AI Fairness 360

IBM’s AI Fairness 360 Toolkit provides open-source algorithms and metrics to detect and reduce bias in AI models. Organizations using this toolkit can:

- Evaluate datasets for fairness.

- Apply debiasing algorithms before deployment.

- Monitor ongoing AI performance for ethical compliance.

This case underscores how technical tools support governance frameworks in large-scale AI adoption (IBM AI Fairness 360 Toolkit).

European Union AI Act

The EU AI Act categorizes AI applications into four risk tiers (unacceptable, high, limited, and minimal) and mandates transparency, accountability, and human oversight for high-risk AI systems. Key takeaways:

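- High-risk AI systems must keep automatic logs so that their decisions can be traced and audited.

- High-risk AI systems must pass a conformity assessment, including testing, before deployment.

- Providers must monitor high-risk systems after deployment and report serious incidents (European Commission AI Act, 2023).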
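
To make the audit-trail idea concrete, here is a minimal sketch of an append-only decision log in which each entry stores the hash of the previous one, so later tampering becomes detectable. The class name, field names, and hash-chaining design are assumptions for illustration. The EU AI Act does not prescribe this format, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional


class DecisionAuditLog:
    """In-memory, append-only log of AI decisions with a simple hash chain."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(entry: Dict[str, Any]) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(
        self,
        model_id: str,
        inputs: Dict[str, Any],
        decision: str,
        timestamp: Optional[str] = None,
    ) -> Dict[str, Any]:
        entry = {
            "model_id": model_id,
            "inputs": inputs,
            "decision": decision,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry was altered, reordered, or removed mid-chain."""
        prev = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

An auditor can call `verify()` before relying on the log; a `False` result means at least one recorded decision no longer matches what was originally written.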

Takeaway: These examples show that AI transformation is a problem of governance, requiring policies, audits, and technical solutions to reduce ethical, operational, and legal risks.

AI Governance Metrics and Evaluation

Monitoring AI governance is crucial for accountability and compliance. Organizations should measure:

| Metric | Description | Target |
|---|---|---|
| Bias Score | Measures fairness in model predictions | <5% |
| Compliance Audit Rate | % of AI systems reviewed annually | 100% |
| Incident Response Time | Avg. time to resolve AI system failures | <24 hours |
| Explainability Index | Percentage of models with interpretable outputs | >90% |

Implementation Tips:

- Conduct regular audits using internal or third-party tools.

- Track governance KPIs alongside operational metrics.

- Align metrics with regulatory requirements, ethical standards, and organizational strategy.
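
Tracking these KPIs can be automated with a small check that compares measured values against the targets in the table. The sketch below assumes hypothetical metric keys such as `bias_score_pct` and treats a missing measurement as a failure. Both choices are illustrative rather than part of any standard.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Kpi:
    name: str                               # hypothetical metric key
    target: str                             # human-readable target from the table
    meets_target: Callable[[float], bool]   # predicate applied to the measured value


KPIS = [
    Kpi("bias_score_pct", "< 5", lambda v: v < 5),
    Kpi("compliance_audit_rate_pct", "100", lambda v: v >= 100),
    Kpi("incident_response_hours", "< 24", lambda v: v < 24),
    Kpi("explainability_index_pct", "> 90", lambda v: v > 90),
]


def failing_kpis(measured: Dict[str, float]) -> List[str]:
    """Return the names of KPIs that miss their target or were not measured."""
    failures = []
    for kpi in KPIS:
        value = measured.get(kpi.name)
        if value is None or not kpi.meets_target(value):
            failures.append(kpi.name)
    return failures
```

Treating an unmeasured KPI as failing is a deliberate design choice in this sketch: a governance dashboard that silently skips missing data tends to hide exactly the systems nobody is watching.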

Future Trends in AI Governance

Predictive Governance

Predictive governance is emerging as a way to make oversight proactive rather than reactive. Organizations are increasingly using AI systems to govern other AI systems, applying machine learning to identify potential ethical and operational risks before they escalate. Predictive governance frameworks can:

- Forecast potential ethical breaches by analyzing historical data, bias patterns, and decision-making trends.

- Identify model drift and bias in real time, ensuring AI outputs remain accurate, fair, and aligned with ethical standards.

- Proactively mitigate operational risks by alerting stakeholders to anomalies, suggesting corrective actions, and triggering automated safeguards.

Predictive governance shows that technology itself can support accountability, transparency, and compliance at scale.

Global Regulation Harmonization

Global regulatory harmonization is another critical trend because the legal landscape is fragmented. AI systems deployed across multiple countries face different laws, ethical standards, and compliance requirements, making consistent governance challenging. Key developments include:

- The OECD AI Principles, which promote human-centric AI adoption and provide global guidance for responsible AI practices.

- Cross-border agreements on data privacy and AI ethics, which aim to reduce conflicts between regional regulations and move toward common standards for multinational organizations.

Harmonization reduces compliance complexity, making it easier for organizations to scale AI systems internationally without risking legal violations or ethical lapses. In the meantime, organizations are increasingly investing in international compliance teams and tools to align AI strategies with emerging global frameworks.

Explainable AI (XAI) Growth

The growth of Explainable AI (XAI) responds to a core governance problem: opaque decision-making. XAI techniques make AI models more transparent, interpretable, and accountable, which is essential for ethical oversight and regulatory compliance. Key benefits include:

- Transparency in AI decision-making, enabling internal teams and regulators to understand how algorithms reach conclusions.

- Auditable AI systems, allowing ethics committees and compliance officers to detect bias, errors, or potential misuse.

- Enhanced public trust, as stakeholders can see evidence that AI models are functioning fairly and responsibly.

As regulations evolve, explainability is increasingly expected in high-risk applications, so that organizations can demonstrate adherence to governance standards. Adopting XAI is a proactive step toward AI deployment that is safe, accountable, and ethically aligned.
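
One widely used model-agnostic explainability technique is permutation importance: shuffle one input feature at a time and measure how much the model's accuracy drops. The sketch below is a simplified, pure-Python version for illustration. The function names are hypothetical, the model is assumed to be any callable that maps a feature row to a label, and real projects would typically use an established library implementation instead.

```python
import random
from typing import Any, Callable, List, Sequence


def accuracy(model: Callable[[Sequence[Any]], Any],
             rows: List[Sequence[Any]], labels: List[Any]) -> float:
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)


def permutation_importance(
    model: Callable[[Sequence[Any]], Any],
    rows: List[Sequence[Any]],
    labels: List[Any],
    n_repeats: int = 20,
    seed: int = 0,
) -> List[float]:
    """Mean accuracy drop per feature when that feature's column is shuffled."""
    rng = random.Random(seed)  # fixed seed so audits are reproducible
    baseline = accuracy(model, rows, labels)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with only the chosen column replaced.
            permuted = [
                list(r[:col]) + [v] + list(r[col + 1:])
                for r, v in zip(rows, shuffled)
            ]
            drops.append(baseline - accuracy(model, permuted, labels))
        importances.append(sum(drops) / n_repeats)
    return importances
```

A feature whose importance is near zero has little influence on the model's decisions, while a large value flags a feature reviewers should examine closely, for example to check whether it acts as a proxy for a protected attribute.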

Takeaway

Predictive governance, global regulatory harmonization, and Explainable AI all address AI transformation as a governance problem. Together they enable proactive risk mitigation, regulatory compliance, and trustworthy AI adoption across industries and borders.

Best Practices Summary

Ethical Governance

Ethical governance is essential because AI systems that operate without oversight can produce bias, unfair outcomes, or societal harm. Organizations should establish AI ethics committees, perform regular bias audits, and adopt responsible AI certifications so that ethical standards are applied consistently across AI design, deployment, and monitoring. This approach not only prevents reputational damage but also keeps AI aligned with societal values and corporate responsibility guidelines (OECD AI Principles, 2023).

Regulatory Compliance

Regulatory compliance is critical because AI projects that are not aligned with global and regional laws create legal exposure. Compliance requires adhering to GDPR, CCPA, and the EU AI Act, as well as monitoring emerging AI legislation in the US, China, and other regions. Organizations must implement continuous auditing, maintain transparent documentation of AI decision-making, and put reporting frameworks in place. Integrating regulatory compliance into governance strategies reduces the risk of legal penalties and strengthens trust with stakeholders.

Organizational Readiness

Organizational readiness ensures that leadership and staff can manage AI responsibly; governance breaks down when people are not prepared for technological change. Companies must invest in AI literacy programs, change management strategies, and cross-functional teams to bridge the gap between technical deployment and strategic oversight. That means training executives to understand AI risks and opportunities, while empowering employees to identify ethical concerns and report anomalies. Organizational readiness creates a culture of accountability and informed decision-making.

Technical Oversight

Technical oversight is a cornerstone of effective governance because models deployed without monitoring or explainability cannot be held to account. Organizations should implement explainable AI (XAI) frameworks, track AI model performance, and maintain audit logs throughout the lifecycle. These technical tools help detect bias, prevent operational failures, and keep AI systems transparent and accountable. Combining robust technical oversight with ethical and regulatory policies makes AI adoption safe, reliable, and trustworthy.

Continuous Improvement

Continuous improvement is necessary because the governance problem evolves as technologies, regulations, and societal expectations change. Organizations must regularly review governance policies, update ethical and compliance frameworks, and apply lessons learned from audits or incidents. This keeps governance adaptive, proactive, and aligned with strategic objectives, and turns it from a reactive measure into a dynamic capability that supports responsible innovation.

Takeaway

Organizations that understand that AI transformation is a problem of governance are better equipped to reduce bias, ensure compliance, and maintain trust while scaling AI initiatives. By embedding ethical frameworks, regulatory alignment, organizational readiness, technical oversight, and continuous improvement at every level, companies can achieve sustainable, responsible AI adoption and long-term operational and strategic success.

FAQs

1. Why is AI transformation considered a governance problem?

Because AI adoption often outpaces policies and ethical frameworks, creating risks in accountability, bias, compliance, and societal impact.

2. What are the main challenges in AI governance?

Ethical risks, regulatory compliance, organizational readiness, technical oversight, and transparency issues.

3. How can organizations implement responsible AI governance?

By establishing ethics committees, conducting bias audits, using explainable AI, and aligning with regulations like GDPR or the EU AI Act.

4. Which regions have the strictest AI regulations?

The European Union currently leads with the AI Act, followed by developing guidelines in the US and China focusing on ethical and national security concerns.

5. How does AI governance affect organizational strategy?

Governance ensures AI aligns with business goals, reduces risks, enhances trust, and supports regulatory compliance.

6. What tools can help monitor AI governance?

IBM AI Fairness 360, Microsoft Responsible AI Dashboard, and internal auditing frameworks for transparency and bias detection.

7. How will AI governance evolve in the next 5 years?

Expect predictive governance, global regulatory harmonization, mandatory explainable AI, and integration of AI ethics in corporate strategy.

Conclusion

AI adoption is no longer just a technical challenge; it is fundamentally a governance challenge. Organizations that implement structured governance frameworks, measure compliance, and continuously monitor AI systems can balance innovation with ethical responsibility. Treating AI transformation as a problem of governance supports long-term sustainability, regulatory alignment, and societal trust.

References

- OECD AI Principles – Human-Centric AI: https://www.oecd.org/going-digital/ai/principles/

- European Commission AI Act: https://digital-strategy.ec.europa.eu/en/policies/european-approach-artificial-intelligence

- IBM AI Fairness 360 Toolkit: https://aif360.mybluemix.net/

- Google AI Principles: https://ai.google/principles/

- McKinsey State of AI Report 2023: https://www.mckinsey.com/capabilities/quantumblack/our-insights/state-of-ai